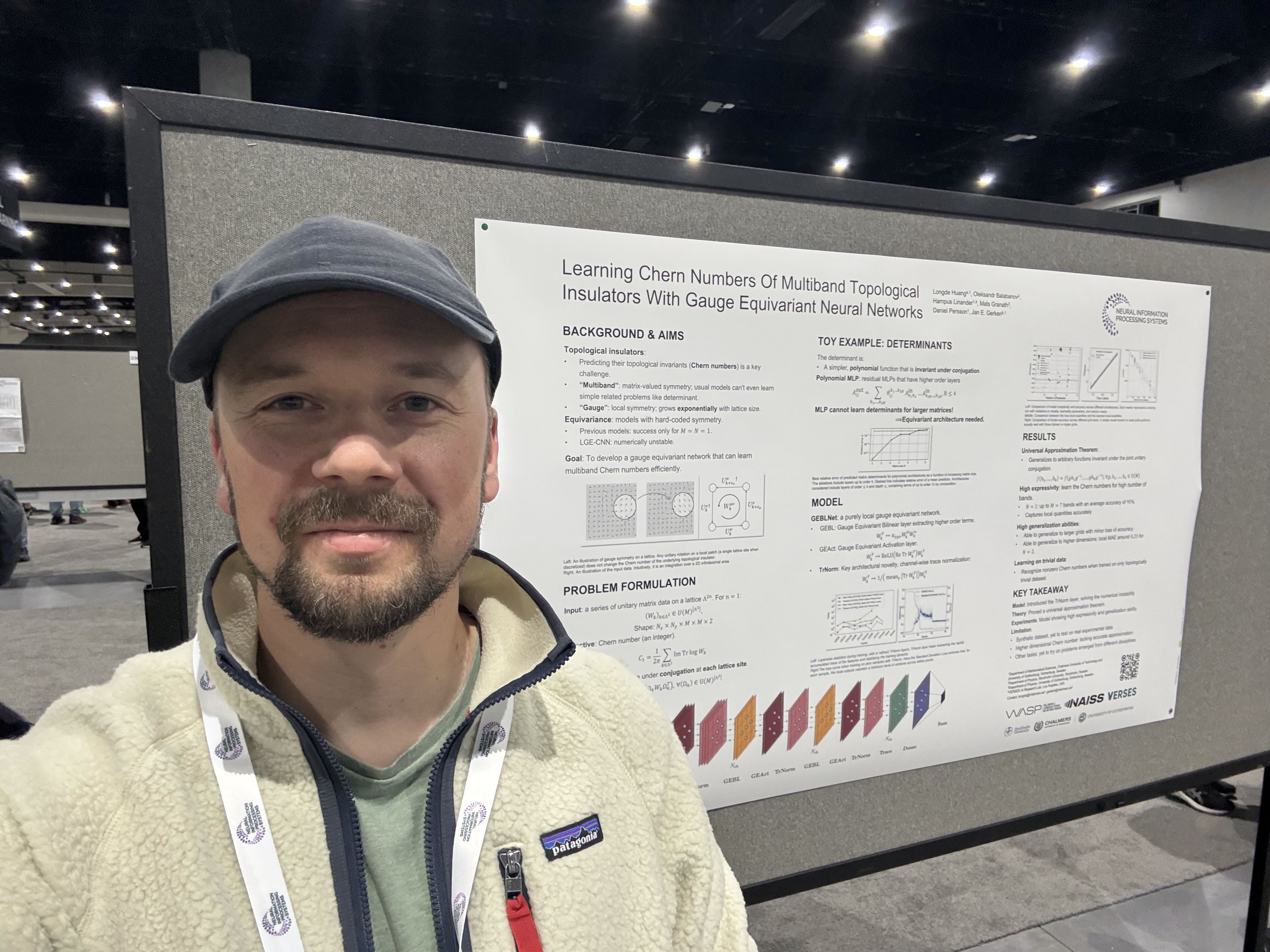

Jimmy Aronsson

PhD student 2018-2023

About me

From 2018 until 2023, I was Daniel Persson’s PhD student. I was also a member of the Wallenberg AI, Autonomous Systems and Software Program (WASP) graduate school.

I wrote my PhD thesis on the mathematical foundations of equivariant neural networks.

Publications

Geometric deep learning and equivariant neural networks

Jan E. Gerken, Jimmy Aronsson, Oscar Carlsson, Hampus Linander, Fredrik Ohlsson, Christoffer Petersson, Daniel Persson

We survey the mathematical foundations of geometric deep learning, focusing on group equivariant and gauge equivariant neural networks. We develop gauge equivariant convolutional neural networks on arbitrary manifolds \(\mathcal{M}\) using principal bundles with structure group \(K\) and equivariant maps between sections of associated vector bundles. We also discuss group equivariant neural networks for homogeneous spaces \(\mathcal{M}=G/K\), which are instead equivariant with respect to the global symmetry \(G\) on \(\mathcal{M}\). Group equivariant layers can be interpreted as intertwiners between induced representations of \(G\), and we show their relation to gauge equivariant convolutional layers. We analyze several applications of this formalism, including semantic segmentation and object detection networks. We also discuss the case of spherical networks in great detail, corresponding to the case \(\mathcal{M}=S^2=\textrm{SO}(3)/\textrm{SO}(2)\). Here we emphasize the use of Fourier analysis involving Wigner matrices, spherical harmonics and Clebsch-Gordan coefficients for \(G=\textrm{SO}(3)\), illustrating the power of representation theory for deep learning.

Geometrical aspects of lattice gauge equivariant convolutional neural networks

Jimmy Aronsson, David I. Müller, Daniel Schuh

Lattice gauge equivariant convolutional neural networks (L-CNNs) are a framework for convolutional neural networks that can be applied to non-Abelian lattice gauge theories without violating gauge symmetry. We demonstrate how L-CNNs can be equipped with global group equivariance. This allows us to extend the formulation to be equivariant not just under translations but under global lattice symmetries such as rotations and reflections. Additionally, we provide a geometric formulation of L-CNNs and show how convolutions in L-CNNs arise as a special case of gauge equivariant neural networks on SU(N) principal bundles.

Homogeneous vector bundles and G-equivariant convolutional neural networks

Jimmy Aronsson

\(G\)-equivariant convolutional neural networks (GCNNs) are geometric deep learning models for data defined on a homogeneous \(G\)-space \(\mathcal{M}\). GCNNs are designed to respect the global symmetry in \(\mathcal{M}\), thereby facilitating learning. In this paper, we analyze GCNNs on homogeneous spaces \(\mathcal{M} = G/K\) in the case of unimodular Lie groups \(G\) and compact subgroups \(K \leq G\). We demonstrate that homogeneous vector bundles are the natural setting for GCNNs. We also use reproducing kernel Hilbert spaces to obtain a precise criterion for expressing \(G\)-equivariant layers as convolutional layers. This criterion is then rephrased as a bandwidth criterion, leading to even stronger results for some groups.