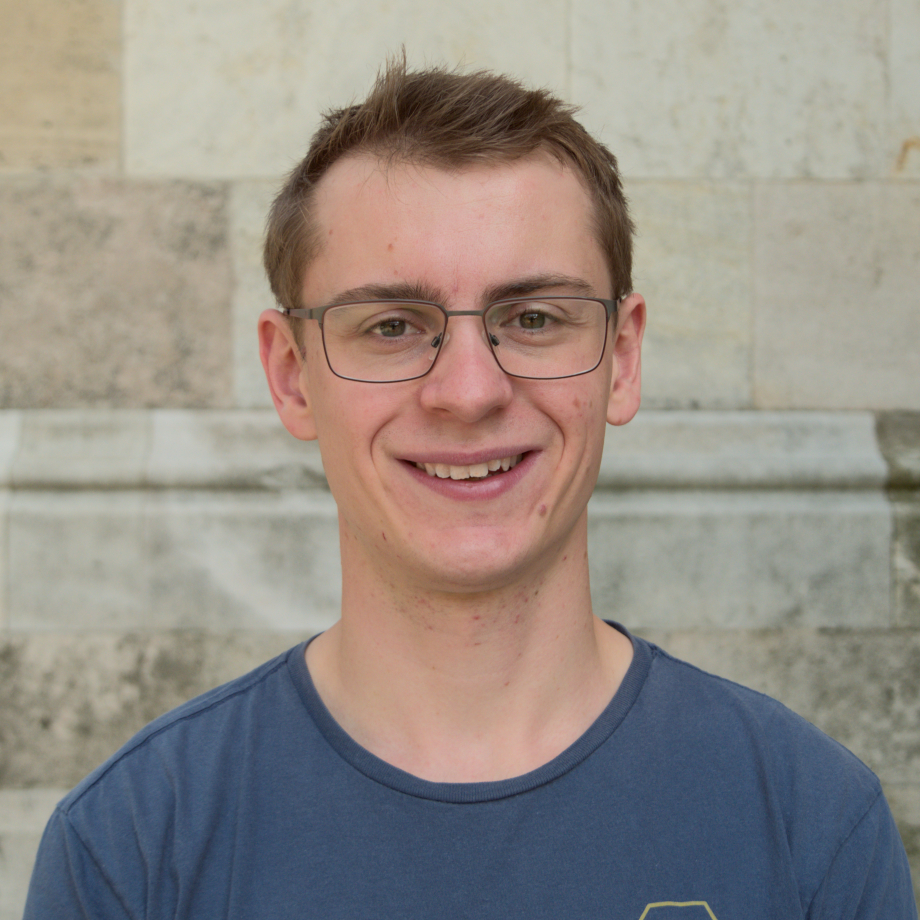

Philipp Misof

PhD student

About me

As part of Jan Gerken’s group, my research lies in the area of mathematical foundations of deep learning. I’m particularly interested in geometric descriptions of neural networks (NNs), e.g. group-equivariant NNs, allowing for better-tailored algorithms, as well as in the regime of wide neural networks that is governed by the neural tangent kernel. Owing to my physics background, my work is influenced by symmetry-centered approaches typically found in mathematical physics and quantum field theory. Possible applications in condensed matter physics and bioinformatics are also part of my interests. My research is supported by the Wallenberg AI, Autonomous Systems and Software Program (WASP).

Internship at Genentech

Currently, I’m complementing my education with a 10-month internship at Genentech (Roche) in Switzerland under the supervision of Pan Kessel. In this project, I am investigating new approaches to generative protein design.

Education

MSc in Physics at the University of Vienna, Austria (2023)

Thesis: Neural network potentials with long-range charge transfer and electrostatics

Supervised by: Prof. Christoph Dellago, Dr. Andreas Tröster

Publications

Finite-Width Neural Tangent Kernels from Feynman Diagrams

Max Guillen, Philipp Misof, Jan E. Gerken

Neural tangent kernels (NTKs) are a powerful tool for analyzing deep, non-linear neural networks. In the infinite-width limit, NTKs can easily be computed for most common architectures, yielding full analytic control over the training dynamics. However, at infinite width, important properties of training such as NTK evolution or feature learning are absent. Nevertheless, finite-width effects can be included by computing corrections to the Gaussian statistics at infinite width. We introduce Feynman diagrams for computing finite-width corrections to NTK statistics. These dramatically simplify the necessary algebraic manipulations and enable the computation of layer-wise recursive relations for arbitrary statistics involving preactivations, NTKs and certain higher-derivative tensors (dNTK and ddNTK) required to predict the training dynamics at leading order. We demonstrate the feasibility of our framework by extending stability results for deep networks from preactivations to NTKs and proving the absence of finite-width corrections for scale-invariant nonlinearities such as ReLU on the diagonal of the Gram matrix of the NTK. We validate our results with numerical experiments.

Equivariant Neural Tangent Kernels

Philipp Misof, Pan Kessel, Jan E. Gerken

Equivariant neural networks have in recent years become an important technique for guiding architecture selection for neural networks with many applications in domains ranging from medical image analysis to quantum chemistry. In particular, as the most general linear equivariant layers with respect to the regular representation, group convolutions have been highly impactful in numerous applications. Although equivariant architectures have been studied extensively, much less is known about the training dynamics of equivariant neural networks. Concurrently, neural tangent kernels (NTKs) have emerged as a powerful tool to analytically understand the training dynamics of wide neural networks. In this work, we combine these two fields for the first time by giving explicit expressions for NTKs of group convolutional neural networks. In numerical experiments, we demonstrate superior performance for equivariant NTKs over non-equivariant NTKs on a classification task for medical images.

Iterative charge equilibration for fourth-generation high-dimensional neural network potentials

Emir Kocer, Andreas Singraber, Jonas A. Finkler, Philipp Misof, Tsz Wai Ko, Christoph Dellago, Jörg Behler

Machine learning potentials allow performing large-scale molecular dynamics simulations with about the same accuracy as electronic structure calculations, provided that the selected model is able to capture the relevant physics of the system. For systems exhibiting long-range charge transfer, fourth-generation machine learning potentials need to be used, which take global information about the system and electrostatic interactions into account. This can be achieved in a charge equilibration step, but the direct solution of the set of linear equations results in an unfavorable cubic scaling with system size, making this step computationally demanding for large systems. In this work, we propose an alternative approach that is based on the iterative solution of the charge equilibration problem (iQEq) to determine the atomic partial charges. We have implemented the iQEq method, which scales quadratically with system size, in the parallel molecular dynamics software LAMMPS for the example of a fourth-generation high-dimensional neural network potential (4G-HDNNP) intended to be used in combination with the n2p2 library. The method itself is general and applicable to many different types of fourth-generation MLPs. An assessment of the accuracy and the efficiency is presented for a benchmark system of FeCl\(_3\) in water.

Talks

The Neural Tangent Kernel: Equivariance, Data Augmentation and Corrections from Feynman Diagrams

Philipp Misof

In this talk, I will summarize the first two years of my PhD research. Starting with an introduction to the neural tangent kernel (NTK), I will explain how we extended it to equivariant architectures and how this extension can be used to derive a connection between manifest equivariance and data augmentation. The second part of the talk focuses on our most recent project: deriving finite-width corrections to the NTK by means of Feynman diagrams.

Equivariant Neural Tangent Kernels - Connecting data augmentation and equivariant architectures

Philipp Misof

In recent years, the neural tangent kernel (NTK) has proven to be a valuable tool for studying the training dynamics of neural networks (NNs) analytically. In this talk, I will present how this NTK framework can be extended to equivariant NNs based on group convolutional NNs (GCNNs). Not only does this enable the analytic study of the influence of hyperparameters, training biases, etc. in equivariant NNs, but it also allows us to draw an interesting connection between data augmentation and manifestly equivariant architectures. In particular, we show that the mean predictions of an ensemble of data-augmented non-equivariant networks coincide with the mean predictions of an ensemble of specific GCNNs at all training times in the infinite-width limit. We further provide explicit implementations of the equivariant NTK for roto-translations in the plane (\(G = C_{n}\ltimes\mathbb{R}^{2}\)) and 3d rotations (\(G=\mathrm{SO}(3)\)). To evaluate the performance of the equivariant infinite-width solution, we benchmark the models on quantum mechanical property prediction and medical image classification.

Equivariant Neural Tangent Kernels

Philipp Misof

In recent years, the neural tangent kernel (NTK) has proven to be a valuable tool for studying the training dynamics of neural networks (NNs) analytically. In this talk, I will present how this NTK framework can be extended to equivariant NNs based on group convolutional NNs (GCNNs). Not only does this enable the analytic study of the influence of hyperparameters, training biases, etc. in equivariant NNs, but it also allows us to draw an interesting connection between data augmentation and manifestly equivariant architectures. In particular, we show that the mean predictions of an ensemble of data-augmented non-equivariant networks coincide with the mean predictions of an ensemble of specific GCNNs at all training times in the infinite-width limit. We further provide explicit implementations of the equivariant NTK for roto-translations in the plane and 3d rotations. To evaluate the performance of the equivariant infinite-width solution, we benchmark the models on quantum mechanical property prediction and medical image classification. This talk is based on joint work with Jan Gerken and Pan Kessel.

Equivariant Neural Tangent Kernels

Philipp Misof

In this talk, I will present a compact overview of our recent work, in which we extend the theory of the neural tangent kernel (NTK) to equivariant neural networks. The NTK has proven to be a useful analytical tool in the study of the training dynamics of wide NNs. Investigating the properties of the NTK associated with group convolutional NNs (GCNNs) allows us to draw a connection between data augmentation and manifestly equivariant architectures. In particular, we show that the mean predictions of an ensemble of data-augmented non-equivariant networks coincide with the mean predictions of an ensemble of specific GCNNs at all training times in the infinite-width limit. This correspondence is numerically verified to also hold at finite width. We further show that the performance boost of \(\mathrm{SO}(3)\)-invariant architectures compared to non-equivariant counterparts in quantum mechanical property prediction extends to the infinite-width limit.

Neural Tangent Kernel for Equivariant Neural Networks

Philipp Misof

The neural tangent kernel (NTK) is a quantity closely related to the training dynamics of neural networks (NNs). It becomes particularly interesting in the infinite-width limit of NNs, where this kernel becomes deterministic and time-independent, allowing for an analytical solution of the gradient descent dynamics under the mean squared error loss, resulting in a Gaussian process behaviour. In this talk, we will first introduce the NTK and its properties, and then discuss how it can be extended to NNs equivariant with respect to the regular representation. In analogy to the forward equation of the NTK of conventional NNs, we will present a recursive relation connecting the NTK to the corresponding kernel of the previous layer in an equivariant NN. As a concrete example, we provide explicit expressions for the symmetry group of 90° rotations and translations in the plane, as well as Fourier-space expressions for \(\mathrm{SO}(3)\) acting on spherical signals. We support our theoretical findings with numerical experiments.
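
For orientation, the statements in this abstract can be written out in standard textbook notation. The symbols below are assumptions chosen for illustration and are not taken from the talk: \(f(x;\theta)\) is a scalar network output with parameters \(\theta_\mu\), \(X\) and \(Y\) are the training inputs and targets, \(\eta\) is the learning rate, and the loss is \(\tfrac{1}{2}\sum_i (f(x_i)-y_i)^2\) trained with gradient flow. The NTK and the resulting training dynamics then read

\[
\Theta_t(x,x') = \sum_{\mu} \frac{\partial f(x;\theta_t)}{\partial \theta_\mu}\,\frac{\partial f(x';\theta_t)}{\partial \theta_\mu},
\qquad
\frac{\mathrm{d} f_t(x)}{\mathrm{d} t} = -\eta \sum_{i} \Theta_t(x,x_i)\,\bigl(f_t(x_i)-y_i\bigr).
\]

If the kernel is constant in time, \(\Theta_t = \Theta\), as in the infinite-width limit, and \(\Theta(X,X)\) is invertible, this linear equation can be solved in closed form:

\[
f_t(x) = f_0(x) + \Theta(x,X)\,\Theta(X,X)^{-1}\bigl(\mathbb{1} - e^{-\eta\,\Theta(X,X)\,t}\bigr)\bigl(Y - f_0(X)\bigr).
\]

Since \(f_t\) depends linearly on the output at initialization \(f_0\), a Gaussian-distributed \(f_0\) yields a Gaussian process at every training time \(t\).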